Linear Algebra

The Hidden Engine of Computer Science and AI

No prerequisites. Just curiosity.

You’re Already Using It

Open Instagram. The face filter that gives you bunny ears? Linear algebra. Ask ChatGPT a question? Linear algebra. Watch a Marvel movie? Linear algebra. Search something on Google? Yes, linear algebra again. It is everywhere in computer science, even when you don’t notice it.

If you are a Computer Science or AI student wondering why your professor keeps insisting on matrices, vectors, and eigenvalues, here is the honest answer: a huge share of the major technology of the last 30 years runs on linear algebra. It is not just “another math subject.” It is the language computers use to work with data, images, sound, and intelligence itself.

This blog will walk you through what linear algebra actually is, why it matters for CS and AI, and how it shows up in tools you use every single day. We are starting from absolute zero. No prerequisites. Just curiosity. This blog is brought to you by i-Qode Digital Solutions, where our teams turn mathematical foundations like these into real-world AI, cloud, and software solutions.

🌱 Curiosity Callout

The graphics chip in your phone (the GPU) was originally designed to draw images on screen, a job that boils down to multiplying matrices very quickly. That same kind of chip is now a big reason ChatGPT, Midjourney, and self-driving cars exist. A piece of math from the 1800s ended up powering the AI revolution of the 2020s.

What Is Linear Algebra, Really?

Linear algebra is the branch of mathematics that deals with vectors (lists of numbers), matrices (grids of numbers), and the operations that transform them. That is it. The reason it is so powerful is that almost anything in the digital world can be represented as a list or grid of numbers.

📘 Beginner Box: Why “Linear”?

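The word “linear” comes from “line.” In math, it refers to operations that keep straight lines straight and keep the origin fixed: they can stretch, rotate, flip, or shear things, but they never bend or curve them. (Shifting everything sideways is technically an “affine” operation, which graphics code folds into a slightly larger matrix.) This predictability is exactly what makes computers love linear algebra.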

The Building Blocks

Before diving into applications, let’s meet the four characters that show up in every linear algebra story.

1. Vectors — Lists with Direction

A vector is just an ordered list of numbers, like [3, 4] or [1, 0, 5, 2]. But there is a twist: each vector also describes a point or an arrow in space.

Real-world analogy: Think of a vector like a delivery address with coordinates. The vector [4, 3] means “go 4 steps east and 3 steps north.” In machine learning, a vector might be [age, salary, years_of_experience] = [25, 50000, 3] — a complete description of a person in three numbers.
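To make this concrete, here is a minimal Python sketch, assuming NumPy is installed. The variable names are made up for illustration; the numbers come from the examples above.

```python
import numpy as np

# The "delivery address" vector: 4 steps east, 3 steps north.
steps = np.array([4.0, 3.0])

# Straight-line distance from the start (the vector's length, or "norm").
distance = np.linalg.norm(steps)  # sqrt(4^2 + 3^2) = 5.0

# A person described by three numbers: [age, salary, years_of_experience].
person = np.array([25, 50000, 3])

# Vectors add element by element: walk [4, 3], then walk [1, -2].
total = steps + np.array([1.0, -2.0])  # [5.0, 1.0]

print(distance, person.shape, total)
```

The same array type holds a 2-number direction or a 3-number description of a person. To the computer, both are simply vectors.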

2. Matrices — Grids That Do Things

A matrix is a rectangular grid of numbers. You can think of it as a stack of vectors, or as a machine that transforms vectors into other vectors.

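Real-world analogy: A grayscale image is literally a matrix. A 1080p frame is a grid of brightness values with 1080 rows and 1920 columns. When Instagram applies a filter, it is running arithmetic over that grid: simple color adjustments multiply each pixel’s values by a small matrix, while effects like blur slide a small filter-matrix across the image.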
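A tiny sketch of the image-as-matrix idea, assuming NumPy. The 3×4 “image” and the brightening amount are invented for illustration; real photos are simply much larger grids.

```python
import numpy as np

# A tiny grayscale "image": 3 rows, 4 columns.
# Each entry is a brightness from 0 (black) to 255 (white).
image = np.array([
    [  0,  50, 100, 150],
    [ 50, 100, 150, 200],
    [100, 150, 200, 250],
], dtype=np.uint8)

print(image.shape)  # (rows, columns) = (3, 4)

# Brighten every pixel by 40. We widen the type first so 250 + 40
# does not wrap around, then clip back into the 0..255 range.
brighter = np.clip(image.astype(np.int16) + 40, 0, 255).astype(np.uint8)

print(brighter[0, 0])  # 0 + 40 = 40
print(brighter[2, 3])  # 250 + 40 = 290, clipped to 255
```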

3. Linear Transformations — The “Verbs” of Math

A linear transformation is an action you apply to vectors using a matrix — rotation, scaling, reflection, projection. The beautiful part is that any combination of these actions can be packed into a single matrix and applied in one shot.

Real-world analogy: When you pinch-zoom on your phone, the screen is performing a linear transformation. When a game character turns to look at you, that is a rotation matrix being applied to thousands of points in real time.
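Here is a small sketch of the “one shot” idea, assuming NumPy. The helper functions `rotation` and `scaling` are hypothetical names written for this example, not part of any library.

```python
import numpy as np

def rotation(theta):
    """2x2 matrix that rotates counter-clockwise by theta radians."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s],
                     [s,  c]])

def scaling(factor):
    """2x2 matrix that scales both axes by the same factor."""
    return factor * np.eye(2)

point = np.array([1.0, 0.0])

# Two separate steps: scale by 2 first, then rotate by 90 degrees.
step_by_step = rotation(np.pi / 2) @ (scaling(2.0) @ point)

# The same two actions packed into ONE matrix (rightmost acts first).
combined = rotation(np.pi / 2) @ scaling(2.0)
one_shot = combined @ point

print(np.round(step_by_step, 6), np.round(one_shot, 6))  # both are approximately [0, 2]
```

The order matters: the matrix written on the right is applied first. Swapping the order can give a different result for other combinations of transformations.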

4. Eigenvalues and Eigenvectors — The Hidden Skeleton

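Many transformations have special directions that do not turn when the transformation is applied; vectors along them only get stretched, shrunk, or flipped. These vectors are called eigenvectors, and the stretch factor is the eigenvalue. (Not every transformation has them in ordinary real-number space: a 90° rotation of a flat plane turns every direction.)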

Real-world analogy: Think of spinning a globe. Every point on the surface moves, except the two poles. The North-South axis is the “eigenvector” of that rotation, and because nothing on it gets stretched, its eigenvalue is 1. Google Search, Netflix-style recommendations, and classic facial recognition methods all rely on finding the “poles” of huge data matrices.
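If you want to see eigenvectors on a computer, here is a minimal sketch using NumPy’s `np.linalg.eig`. The 2×2 matrix `A` is an arbitrary example chosen for this illustration.

```python
import numpy as np

# An arbitrary symmetric 2x2 matrix used only as an example.
A = np.array([[2.0, 1.0],
              [1.0, 2.0]])

# eig returns the eigenvalues and a matrix whose COLUMNS are the eigenvectors.
eigenvalues, eigenvectors = np.linalg.eig(A)

checks = []
for i in range(len(eigenvalues)):
    lam = eigenvalues[i]
    v = eigenvectors[:, i]
    # Applying A to an eigenvector only rescales it by its eigenvalue.
    same_direction = np.allclose(A @ v, lam * v)
    checks.append(same_direction)
    print("eigenvalue:", round(float(lam), 6), "eigenvector:", np.round(v, 3), "A v == lam v:", same_direction)
```

For this matrix the eigenvalues are 3 and 1, and the eigenvectors lie along the two diagonals. NumPy does not promise the order in which it returns them.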

🌱 Curiosity Callout

The word “eigen” is German for “own” or “characteristic.” Eigenvectors are the directions a matrix considers its own — the ones it doesn’t try to twist around. Once you can find the “eigen” of a system, you usually understand what makes it tick.

🌱 🌱 🌱

Why It Matters: Where You Actually See Linear Algebra

Computers cannot understand pictures, words, or sounds the way humans do. They only understand numbers. Linear algebra is the bridge that turns everything in the world — text, images, audio, video, even friendships on social media — into numbers a computer can crunch quickly.

1. Artificial Intelligence and Machine Learning

Every modern AI system, from spam filters to ChatGPT, is built on linear algebra. A neural network is essentially a long chain of matrix multiplications with some non-linear functions sprinkled in between.

- Neural Networks: When a network “learns,” it is adjusting the numbers inside its weight matrices. GPT-3, for example, has about 175 billion of these numbers.

- Word Embeddings: Words like “king,” “queen,” “man,” and “woman” become vectors. Famously, the vector math king − man + woman ≈ queen actually works. Language becomes geometry.

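- Recommendation Systems: A Netflix-style recommender can represent you and every movie as a vector. Your next recommendation is a movie whose vector points in nearly the same direction as yours. That closeness is typically scored with the dot product or the closely related cosine similarity.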

- Dimensionality Reduction (PCA): Imagine compressing a 1000-column dataset into just 10 columns while keeping most of the variation in the data. PCA uses the eigenvectors of the data’s covariance matrix to do exactly that.
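The recommendation idea from the list above can be sketched in a few lines, assuming NumPy. Everything here is hypothetical: the movie titles, the three “taste” dimensions, and the numbers are invented for illustration and say nothing about how any real service works.

```python
import numpy as np

def cosine_similarity(a, b):
    """Dot product of a and b after scaling both to length 1."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Hypothetical taste vectors: [action, comedy, romance].
user = np.array([0.9, 0.1, 0.2])
movies = {
    "Explosive Heist": np.array([1.0, 0.2, 0.0]),
    "Laugh Track":     np.array([0.1, 1.0, 0.3]),
    "Paris in Spring": np.array([0.0, 0.3, 1.0]),
}

# Score every movie against the user and pick the highest score.
scores = {title: cosine_similarity(user, vec) for title, vec in movies.items()}
best = max(scores, key=scores.get)

print(best)  # Explosive Heist
```

A score near 1 means the two vectors point the same way; a score near 0 means they have little in common.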

📘 Beginner Box: Neural Network

A computer program loosely inspired by the brain. It’s made of layers of “neurons” — really just numbers — connected by weights stored in matrices. Training a neural network = adjusting those matrix numbers until the output looks right.
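As a rough sketch of that description, here is a two-layer network’s forward pass in NumPy. The layer sizes are arbitrary and the weights are random placeholders, so the output is meaningless; training would be the process of adjusting `W1` and `W2`.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable

# One input with 3 features (made-up numbers).
x = np.array([0.5, -1.0, 2.0])

# Weight matrices and biases: random placeholders, NOT trained values.
W1 = rng.normal(size=(4, 3))   # layer 1: 3 inputs -> 4 hidden "neurons"
b1 = np.zeros(4)
W2 = rng.normal(size=(2, 4))   # layer 2: 4 hidden -> 2 outputs
b2 = np.zeros(2)

def relu(z):
    """A common non-linear function: negatives become 0."""
    return np.maximum(z, 0.0)

# Forward pass: matrix multiply, non-linearity, matrix multiply.
hidden = relu(W1 @ x + b1)
output = W2 @ hidden + b2

print(hidden.shape, output.shape)  # (4,) (2,)
```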

💡 In practice: This is exactly the kind of AI work our teams at i-Qode Digital Solutions deliver — building production-ready machine learning systems where these matrix operations run at scale to power real business outcomes.

2. Computer Graphics, Games, and AR/VR

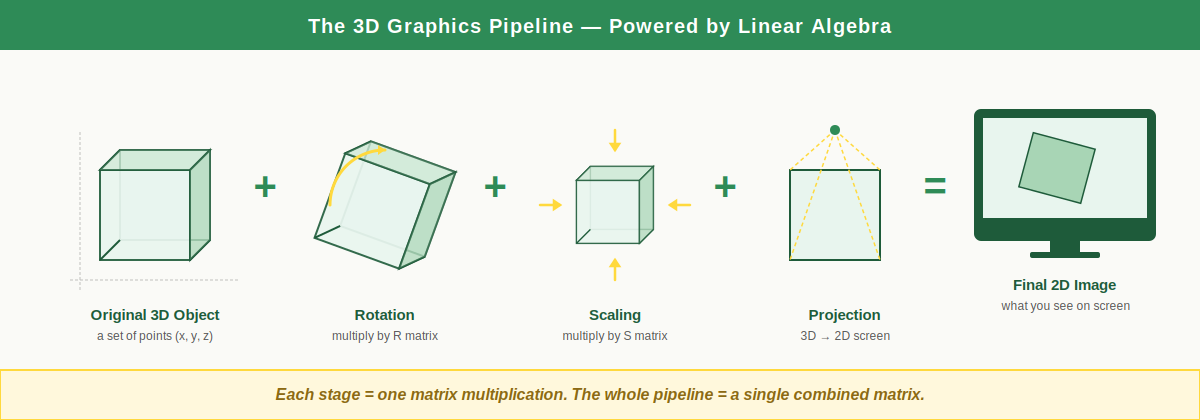

Every 3D game you have ever played — Minecraft, GTA, Valorant, Fortnite — is solving linear algebra problems 60 to 144 times per second to draw what you see on screen.

- Rotation: When your character turns, every point on their 3D model is multiplied by a rotation matrix. A game with 10,000 visible objects performs millions of matrix multiplications per frame.

- Projection: Your monitor is flat (2D), but the game world is 3D. A projection matrix flattens the 3D world onto your screen while preserving the illusion of depth — distant objects look smaller, closer ones look bigger.

- Scaling and Animation: Resizing objects, zooming the camera, and even smooth character animations are all matrix transformations.

Figure 1: The 3D graphics pipeline. Every stage — rotation, scaling, projection — is one matrix multiplication. The full pipeline is just a single combined matrix applied to every point of the 3D object.
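To see why distant objects look smaller, here is a deliberately simplified pinhole-camera sketch in NumPy. The matrix `P`, the `focal` value, and the `project` helper are assumptions made for this example; real engines use a more elaborate 4×4 projection matrix, and they follow the matrix multiplication with a division step like the one below.

```python
import numpy as np

focal = 1.0  # hypothetical focal length of the virtual camera

# Simplified projection matrix: maps (x, y, z, 1) to (focal*x, focal*y, z).
P = np.array([
    [focal, 0.0,   0.0, 0.0],
    [0.0,   focal, 0.0, 0.0],
    [0.0,   0.0,   1.0, 0.0],
])

def project(point_3d):
    """Flatten a 3D point (camera looking along +z) onto the 2D screen."""
    x, y, z = point_3d
    homogeneous = np.array([x, y, z, 1.0])
    u, v, w = P @ homogeneous
    # Perspective divide: dividing by depth makes far things smaller.
    return np.array([u / w, v / w])

near = project((1.0, 1.0, 2.0))    # depth 2  -> [0.5, 0.5]
far = project((1.0, 1.0, 10.0))    # depth 10 -> [0.1, 0.1]

print(near, far)
```

The same corner of an object lands closer to the center of the screen when it is farther away, which is exactly the illusion of depth.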

3. Computer Vision and Image Processing

Every photo on your phone is a matrix of pixel values. Linear algebra lets computers “see.”

- Filters and Effects: Blur, sharpen, edge detection — all of these are convolutions, a linear algebra operation where a small matrix slides over the image.

- Face Recognition: Systems such as Apple’s Face ID turn your face into a mathematical representation (in essence, a vector) and compare it to the stored one using distance measures from linear algebra.

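- Image Compression: JPEG compression uses the Discrete Cosine Transform (DCT), a linear transformation that rewrites each 8×8 block of pixels in terms of frequency patterns so the least visible detail can be thrown away. A related technique, Singular Value Decomposition (SVD), can also shrink an image by keeping only its most important components.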
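The “small matrix slides over the image” idea from the list above can be sketched directly, assuming NumPy. The `convolve2d` function here is a slow, hand-written illustration (real libraries are far faster), and the 5×5 image and box-blur kernel are invented test data.

```python
import numpy as np

def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, so the output is smaller)."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    flipped = kernel[::-1, ::-1]  # a true convolution flips the kernel
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            patch = image[i:i + kh, j:j + kw]
            # Multiply element by element, then add everything up.
            out[i, j] = np.sum(patch * flipped)
    return out

# A dark 5x5 image with one bright pixel in the middle.
image = np.zeros((5, 5))
image[2, 2] = 9.0

# Box blur: every output pixel is the average of a 3x3 neighborhood.
box_blur = np.full((3, 3), 1.0 / 9.0)

blurred = convolve2d(image, box_blur)
print(blurred)  # a 3x3 grid of 1.0: the bright spot has been smeared out
```

Swapping in a different 3×3 kernel gives sharpening or edge detection with the same loop.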

4. Search Engines and Networks

- Google PageRank: Larry Page and Sergey Brin built Google on a single linear algebra insight. They modeled the entire web as a giant matrix and used eigenvectors to rank which pages mattered most.

- Social Networks: Networks like Instagram and LinkedIn can be modeled as adjacency matrices, where entry (i, j) is 1 if person i and person j are connected. Multiplying this matrix by itself counts friends-of-friends, which is the basic idea behind “People you may know.”
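Here is a small sketch of the friends-of-friends idea, assuming NumPy. The four people and their friendships are invented, and `suggestions` is a hypothetical helper; real platforms use far more signals than this.

```python
import numpy as np

people = ["Asha", "Ben", "Chen", "Dia"]

# Adjacency matrix for a chain of friendships: Asha-Ben, Ben-Chen, Chen-Dia.
# A[i, j] = 1 means person i and person j are friends.
A = np.array([
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
])

# (A @ A)[i, j] counts two-step paths from i to j, i.e. mutual friends.
paths2 = A @ A

def suggestions(i):
    """People who share a friend with person i but are not yet friends."""
    return [people[j] for j in range(len(people))
            if j != i and A[i, j] == 0 and paths2[i, j] > 0]

print(suggestions(0))  # ['Chen']  (Asha and Chen both know Ben)
print(suggestions(1))  # ['Dia']   (Ben and Dia both know Chen)
```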

5. Cryptography and Cybersecurity

Several modern encryption schemes, including much of the post-quantum cryptography designed to protect us from quantum computers, rely on the difficulty of certain linear algebra problems.

- Lattice-Based Cryptography: These schemes are based on problems such as the “shortest vector problem,” which is easy to set up but believed to be extremely hard to crack, even for a quantum computer.

6. Quantum Computing

Quantum computing is, mathematically, just linear algebra with complex numbers. Every quantum gate is a matrix, and every quantum state is a vector. If you understand linear algebra, you have already done most of the work needed to learn quantum computing.
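A one-qubit sketch of “gate = matrix, state = vector,” assuming NumPy. It uses the standard Hadamard gate; the variable names are chosen for this example.

```python
import numpy as np

# The quantum state |0> written as a vector.
zero = np.array([1.0, 0.0])

# The Hadamard gate written as a 2x2 matrix.
H = np.array([[1.0,  1.0],
              [1.0, -1.0]]) / np.sqrt(2)

# Applying a gate is a matrix-vector multiplication.
state = H @ zero

# Measurement probabilities are the squared magnitudes of the entries.
probabilities = np.abs(state) ** 2

# Quantum gates are unitary: conjugate-transpose times itself is the identity.
is_unitary = np.allclose(H.conj().T @ H, np.eye(2))

print(np.round(probabilities, 6), is_unitary)  # 50/50 chance of 0 or 1, True
```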

🌱 Curiosity Callout

Google’s PageRank algorithm, the one that helped make Google a trillion-dollar company, is essentially finding the dominant eigenvector of a matrix that represents the links of the entire World Wide Web. One math concept. One trillion dollars. Linear algebra has serious receipts.
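Here is a toy version of that eigenvector idea, assuming NumPy. The four-page “web” is invented, and the damping value of 0.85 is a commonly quoted choice rather than anything Google confirms using today. Repeatedly multiplying by the matrix (called power iteration) settles on its dominant eigenvector.

```python
import numpy as np

# Hypothetical web: links[i] lists the pages that page i links to.
links = {0: [1, 2], 1: [2], 2: [0], 3: [0, 2]}
n = len(links)

# M[j, i] = probability that a surfer on page i clicks through to page j.
M = np.zeros((n, n))
for src, targets in links.items():
    for dst in targets:
        M[dst, src] = 1.0 / len(targets)

# With probability (1 - damping) the surfer jumps to a random page instead.
damping = 0.85
G = damping * M + (1.0 - damping) / n * np.ones((n, n))

# Power iteration: start with equal ranks and keep multiplying.
rank = np.full(n, 1.0 / n)
for _ in range(100):
    rank = G @ rank

print(np.round(rank, 3))
print("lowest-ranked page:", int(np.argmin(rank)))  # page 3: nobody links to it
```

Once the numbers stop changing, `G @ rank` equals `rank`, which is exactly the definition of an eigenvector with eigenvalue 1.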

🌱 🌱 🌱

A Concrete Example: Rotating a Point

Let us walk through one tiny calculation so you can see linear algebra in action. Don’t worry if you’ve never multiplied a matrix before — we’ll do it step by step.

Suppose we have a point at coordinates (1, 0) and we want to rotate it 90 degrees counter-clockwise around the origin. The rotation matrix for 90° is:

$$R = \begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix}$$

To rotate the point [1, 0], we multiply the matrix by the vector:

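$$R \begin{bmatrix} 1 \\ 0 \end{bmatrix} = \begin{bmatrix} 0 \times 1 + (-1) \times 0 \\ 1 \times 1 + 0 \times 0 \end{bmatrix} = \begin{bmatrix} 0 \\ 1 \end{bmatrix}$$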

The point (1, 0) becomes (0, 1) — exactly what a 90° counter-clockwise rotation should produce. Now imagine doing this for the millions of points that make up a 3D character in your favorite game, 60 times per second. That is what your GPU does. That is linear algebra.
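The same calculation in Python, as a short sketch assuming NumPy. The triangle is an extra, made-up example showing how one multiplication rotates several points at once.

```python
import numpy as np

# The 90-degree counter-clockwise rotation matrix from the example.
R = np.array([[0, -1],
              [1,  0]])

point = np.array([1, 0])
rotated = R @ point
print(rotated)  # [0 1]

# Many points at once: each COLUMN is one point of a triangle,
# here (0, 0), (2, 0) and (0, 1).
triangle = np.array([[0, 2, 0],
                     [0, 0, 1]])
rotated_triangle = R @ triangle
print(rotated_triangle)  # columns are now (0, 0), (0, 2) and (-1, 0)
```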

🌱 Curiosity Callout

You just did real graphics programming math. The same kind of calculation runs inside Unreal Engine, Blender, and every Pixar movie, only with slightly bigger matrices (usually 4×4) and many more points. The principle is identical.

Your Learning Roadmap

Convinced? Here is a practical 4-step roadmap that has worked for thousands of students. Don’t try to do everything at once — go in order.

Step 1 — Build Intuition First

Watch the YouTube series “Essence of Linear Algebra” by 3Blue1Brown. It is free, visually beautiful, and will give you a feel for what’s actually happening before you touch any equations. Do this before any textbook.

Step 2 — Learn the Mechanics

Practice matrix multiplication, finding determinants, and computing eigenvalues by hand. Khan Academy and MIT OpenCourseWare (Prof. Gilbert Strang’s course) are the gold standards. Two weeks of consistent practice will take you a long way.

Step 3 — Code It Up

Use Python with NumPy. Try writing a program that rotates a triangle, performs PCA on a dataset, or compresses an image using SVD. Code makes the math stick. You’ll go from “I sort of get it” to “I really get it.”
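As a starting point for the SVD idea, here is a hedged sketch using NumPy’s `np.linalg.svd`. The “image” is synthetic (a smooth gradient plus a little noise) so the example runs without any files, and keeping `k = 5` components is an arbitrary choice.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable

# Stand-in "image": a smooth gradient plus a little noise (made-up data).
rows, cols = 40, 60
gradient = np.outer(np.linspace(0, 1, rows), np.linspace(0, 1, cols))
image = gradient + 0.01 * rng.normal(size=(rows, cols))

# Factor the image into U, the singular values s, and Vt.
U, s, Vt = np.linalg.svd(image, full_matrices=False)

# Keep only the k largest singular values and their vectors.
k = 5
approx = U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]

# Compare how many numbers each version needs.
stored_full = rows * cols                    # 2400
stored_compressed = k * (rows + cols + 1)    # 505

relative_error = np.linalg.norm(image - approx) / np.linalg.norm(image)
print(stored_full, stored_compressed, relative_error)
```

Try changing `k` and watch the trade-off: fewer components means less storage but a larger error.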

Step 4 — Apply to a Project

Build a small recommendation system, train a tiny neural network from scratch, or implement a basic image filter. This is where the math becomes a superpower instead of homework.

💡 Looking for real-world experience? Companies like i-Qode Digital Solutions are constantly looking for students who can bridge math fundamentals with practical implementation. Strong linear algebra skills + Python + a portfolio project can open doors to internships and entry-level roles in AI, software development, and data science.

🌱 Curiosity Callout

Pro tip: Don’t just memorize formulas. Always ask: “What is this transformation doing geometrically?” If you can picture it in your head, you understand it. If you can only crunch the numbers, you’re memorizing — not learning.

🌱 WHAT’S NEXT IN FROM ZERO TO CURIOUS

Coming up next in From Zero to Curious: “How Neural Networks Actually Learn — Explained Without the Calculus.” We’ll take what you just learned about matrices and use it to peek inside the brain of an AI. No PhD required. Just bring your curiosity.

Wrapping Up

Linear algebra is not a dusty academic subject — it is the operating system of the modern digital world. It powers the AI models reshaping every industry, the games that entertain billions, the search engines we depend on, and the security protocols that keep our data safe.

For a Computer Science or AI student, learning linear algebra is one of the highest-return investments you can make. It will not just help you pass an exam — it will give you the vocabulary to understand how almost every important technology of your lifetime actually works.

So the next time someone asks why you are studying matrices, smile and say: “Because I am learning the language that computers use to think.”

🌱

That’s it for this edition of From Zero to Curious.

You started at zero. Hopefully, you’re leaving curious.

References & Further Reading

- 3Blue1Brown — Essence of Linear Algebra (YouTube series)

- Gilbert Strang — Introduction to Linear Algebra (textbook and MIT OpenCourseWare lectures)

- Deep Learning by Goodfellow, Bengio, and Courville — Chapter 2

- Khan Academy — Linear Algebra course

- Mathematics for Machine Learning by Deisenroth, Faisal, and Ong

- NumPy and PyTorch documentation — for hands-on practice

About the Author

This blog comes from a B.Tech (Mathematics and Computing) student trainee, contributed as part of the From Zero to Curious series at i-Qode Digital Solutions, an IT services and talent solutions company committed to nurturing the next generation of tech talent. Researched, structured, and written for fellow learners who want to understand the why behind the math, not just the what.